Take Better Risks: Figuring Out Where to Place Technology Bets with a Tech Adoption Rubric

design

Understanding Design Systems: Why They Matter and How They Can Transform Your Digital Experience

By: Julie

design

Rapid Wireframing: What It Is & How to Do It

By: Chelsea

Nonprofit tech strategy

RSS Feeds: A Simple Win for the Nonprofit Workflow

By: Olivia Oldach

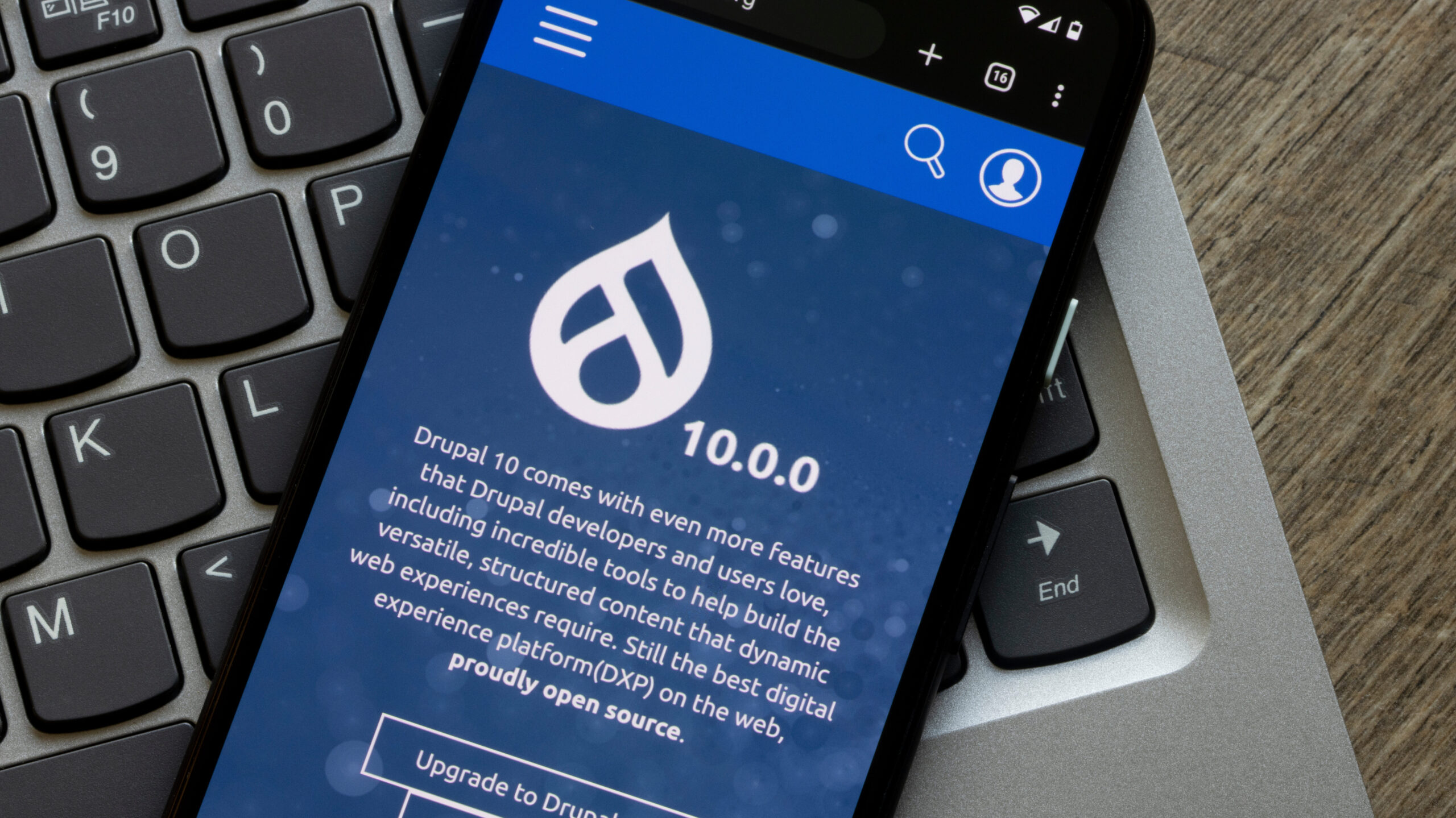

Drupal

Navigating the Transition: Upgrading to Drupal 10

By: Ben

ThinkShout Rebranded

By: Lev

digital strategy

Goodbye Email Open Rates: Prepare for Apple’s Privacy Update

By: Aneta

analytics

Google Analytics 4 Migration

By: Sabriya

DevOps

Tag! You’re a Release!

By: Joe